LangGraph: A Framework for Building Deterministic, Stateful LLM Applications

Large Language Models have forced software engineers to rethink how computation, reasoning and workflows are designed. While early LLM applications focused heavily on prompt engineering and conversational interfaces, real-world systems quickly exposed the limitations of treating LLMs as stateless text generators.

LangGraph emerges as a response to this architectural gap. It does not attempt to make models more intelligent, instead it provides a formal structure for controlling intelligence. At its heart, LangGraph is a framework for transforming probabilistic language models into systems with explicit control flow and observable state.

What LangGraph Is (and Isn’t)

Before diving deeper, it’s important to calibrate expectations:

LangGraph is:

- A graph/state machine orchestration model for multi-step LLM workflows

- A framework for explicit state management and deterministic control flow

- A system for making LLM application behavior observable and reproducible

LangGraph isn’t:

- A magic reasoning engine that makes models smarter

- A replacement for good prompt engineering or model quality

- A guarantee of output correctness (models remain probabilistic)

Understanding Determinism in LLM Systems

When we say “deterministic,” we don’t mean identical outputs every time that’s impossible with probabilistic models. Instead, we mean:

Deterministic control flow: Allowed transitions between steps are explicit, bounded and can be enumerated at design time.

Reproducible execution trace: Given the same inputs, model version, tool versions and state checkpoints, you can replay a comparable run and understand why decisions were made.

Non-deterministic reasoning remains inside nodes: The model’s outputs may vary, but the execution topology does not.

This distinction is critical. LangGraph doesn’t eliminate stochasticity it contains it within a predictable structure.

The Fundamental Problem with Modern LLM Applications

The rapid adoption of LLMs has created a false sense of progress in system design. Many applications appear functional on the surface, yet become brittle under moderate complexity. This failure is not due to model limitations, but rather the absence of formal structure around how models are invoked, how decisions are made and how intermediate results are preserved.

Most LLM-based systems today are constructed using one of three approaches:

- Prompt chaining

- Tool-augmented agents

- Conversation-driven state management

Each approach works for small-scale experimentation, but all suffer from structural weaknesses when systems grow in complexity.

1. The Illusion of Stateless Intelligence

LLMs are inherently stateless. Every interaction is a probabilistic function of the input text. When developers attempt to simulate memory, they do so by re-injecting prior outputs into future prompts. This approach has serious drawbacks:

- Memory becomes unbounded

- Context windows are finite

- Logical state is mixed with natural language

- Debugging becomes non-local and non-reproducible

From a systems perspective, this is equivalent to encoding application state inside free-form text, a red flag in systems that require auditability and change control.

2. Linear Chains Do Not Reflect Real Computation

Prompt chains assume a strictly linear execution model:

Input → Step A → Step B → Output

However, real-world workflows rarely behave this way. They require:

- Branching based on conditions

- Iterative refinement

- Validation and retries

- Early termination

- Human intervention

Without a formal control flow model, these behaviors are either hacked together or delegated entirely to autonomous agents introducing unpredictability.

3. Agent Autonomy Without Constraints

Agents are often presented as a solution to complex reasoning. In practice, unconstrained agents introduce reliability challenges:

- Non-deterministic execution paths

- Difficulty in guaranteeing termination

- Unbounded tool usage

- Limited observability

From a systems perspective, unconstrained agents behave more like black boxes than reliable services.

However, agents aren’t inherently problematic—they just need explicit guardrails in production systems:

- Tool allowlists with schema validation

- Budgeted steps, tool calls, and token limits

- Timeouts and termination conditions

- Policy checks (e.g., PHI redaction, safety gates)

- Human-in-the-loop nodes for escalation

Autonomy is fine—but it must be budgeted, policy-gated and observable in regulated production systems.

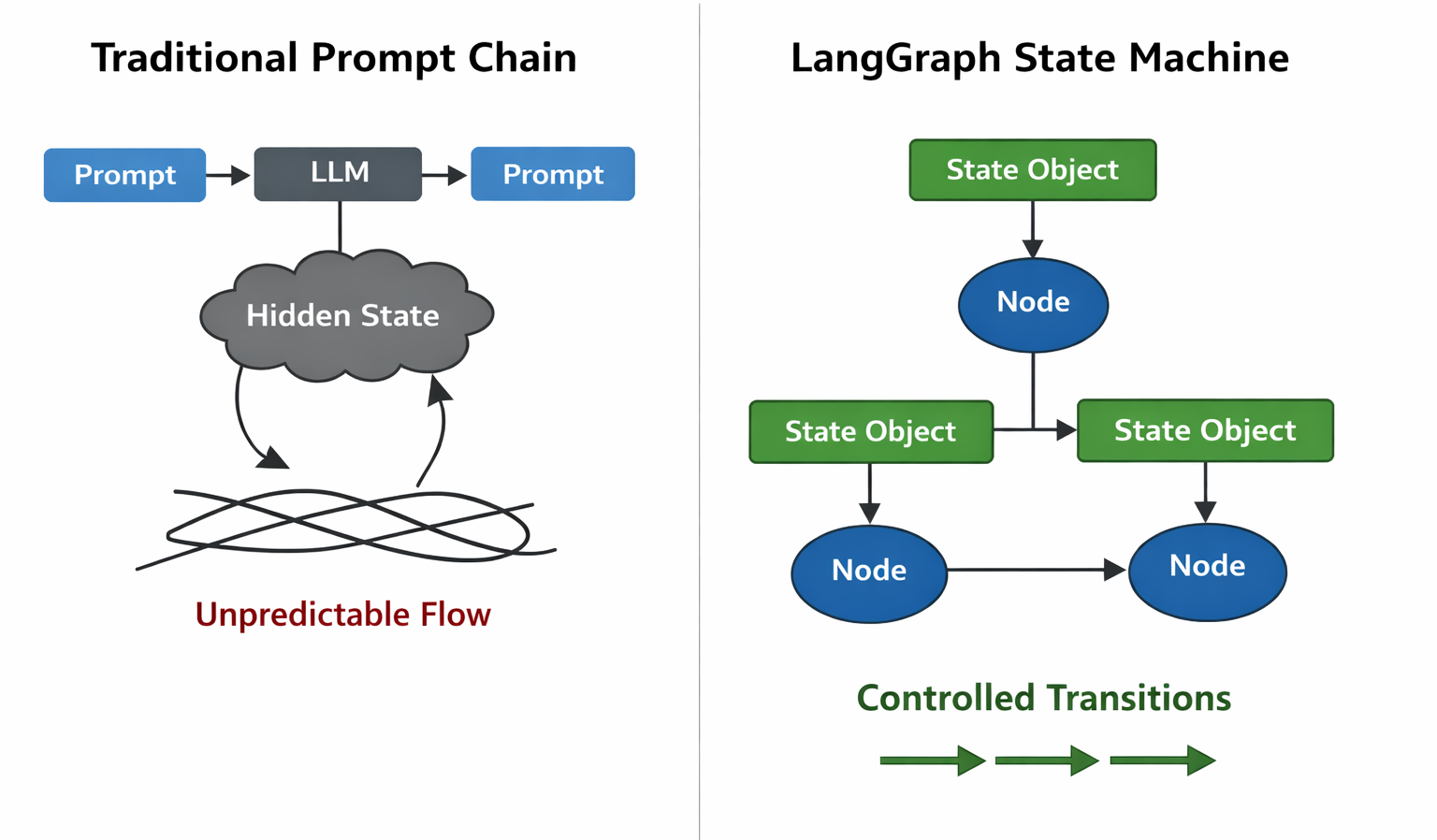

LangGraph as a Conceptual Shift

LangGraph should be understood not as an extension of prompt engineering, but as a corrective measure to an architectural mismatch. LLMs are probabilistic, non-deterministic components, yet they are increasingly embedded in systems that demand predictability, traceability and control. Without a governing framework, these requirements are fundamentally incompatible.

LangGraph reframes LLM applications as state machines rather than conversations.

This shift is subtle but profound. In classical computer science, state machines provide:

- Formal guarantees

- Explicit transitions

- Predictable execution

- Clear failure modes

LangGraph borrows these principles and applies them to LLM orchestration.

Graph Theory as an Execution Model

At its core, LangGraph models an AI workflow as a directed graph:

- Vertices (nodes) represent computational units

- Edges represent control flow

- Conditions determine transitions

- Cycles enable iteration

This is not merely an implementation detail, it is the core abstraction.

Unlike chains, graphs allow:

- Multiple possible futures from the same state

- Convergence of different execution paths

- Controlled recursion and looping

- Branching based on runtime conditions

From a graph theory perspective, this provides bounded non-determinism: execution paths may vary, but they remain within an explicitly designed topology. While the behavior space can still grow via loops and retries, the structure itself is known and auditable.

State as a First-Class Citizen

One of LangGraph’s most important contributions is its treatment of state as a structured, evolving data object rather than a conversational artifact.

Structured State vs Linguistic Memory

Traditional LLM systems blur the line between:

- Application state

- Reasoning trace

- User facing output

LangGraph enforces separation:

- State is structured (typed, explicit fields)

- Reasoning is internal (happens inside nodes)

- Output is derived (from final state)

This mirrors established principles in software architecture such as:

- Separation of concerns

- Explicit data contracts

- Deterministic state transitions

This design allows LLM systems to behave more like pure functions over state, even when the underlying model remains probabilistic.

Example: PHI-Safe State Design in Healthcare

In regulated domains like healthcare, state design directly impacts compliance. Consider this structure:

from typing import TypedDict, Any

class HealthcareAgentState(TypedDict):

raw_input: str

sanitized_input: str

phi_detected: bool

audit_log: list[dict[str, Any]]

decision_trace: list[str]

response: str

escalation_required: bool

Key design choices:

- Separate

raw_inputfromsanitized_inputto ensure PHI isn’t propagated audit_logtracks every decision with timestamps for compliancedecision_traceprovides human-readable explanation of reasoningescalation_requiredflag enables human-in-the-loop intervention- Logs stored separately with retention policies that respect data governance

This isn’t just good practice, it’s how you make LLM systems auditable in regulated environments.

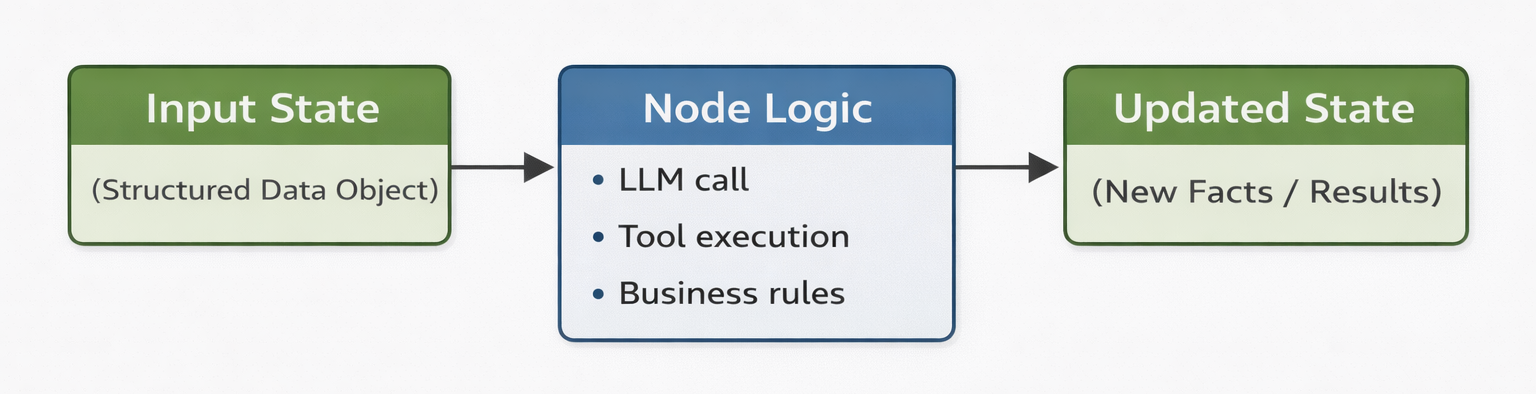

Nodes as Bounded Reasoning Units

In LangGraph, nodes are not “AI thoughts.” They are explicit computational steps.

Each node:

- Consumes state

- Performs a bounded operation

- Produces a state update

This model encourages:

- Idempotency (safe to retry)

- Reproducibility (same state in → comparable behavior)

- Observability (clear input/output contracts)

By decomposing intelligence into smaller, purpose-driven nodes, LangGraph aligns with modular system design principles. Each node can be reasoned about independently, tested in isolation and replaced without affecting the entire system.

This modularity reduces system complexity. Problems still exist, but they are localized rather than global.

Control Flow as a First-Class Concern

Control flow in LangGraph is explicit, not inferred.

This has major implications:

- Execution paths can be reasoned about statically

- Failure cases can be enumerated

- Retries can be bounded

- Infinite loops can be prevented

Instead of asking an agent “What should I do next?”, LangGraph asks:

“Given this state, what transitions are allowed?”

This distinction transforms LLM orchestration from heuristic decision making into rule governed execution, similar to how safety critical systems are built. Intelligence informs decisions, but does not execute them autonomously.

Failure Modes and Safe Termination

LangGraph’s biggest production value isn’t just graphs, it’s enumerable failure modes and bounded recovery strategies.

Common Failure Scenarios

Every production LLM system must handle:

- Tool failure (API timeout, rate limit, invalid response)

- Retrieval failure (no relevant documents found)

- Low-confidence output (model uncertainty, hallucination risk)

- Policy violation (inappropriate content, PHI leak)

- Timeout (computation exceeds budget)

Explicit Failure Handling

In LangGraph, each failure maps to explicit transitions:

# Conceptual illustration

def add_conditional_edges_with_fallback(graph, node_name):

graph.add_conditional_edges(

node_name,

route_based_on_state,

{

"success": "next_step",

"retry": node_name, # Bounded retry

"fallback": "safe_response", # Degraded mode

"escalate": "human_review", # Human intervention

"terminate": END # Safe exit

}

)

This design enables:

- Graceful degradation instead of catastrophic failure

- Budget enforcement (max 3 retries, then escalate)

- Audit trails for compliance teams

- Reproducible failure analysis

Observability: State Transitions as First-Class Telemetry

In production systems, instrumentation is architecture.

What to Measure

Don’t just say “we have observability.” Instrument:

- Transition counts (which paths are taken most often?)

- Loop iterations (are we stuck in refinement cycles?)

- Retry reasons (which tools fail most?)

- Node latency distributions (where are bottlenecks?)

- Policy gate blocks (what’s being caught by safety checks?)

- Escalations per 1k requests (when do humans intervene?)

Implementation Pattern

# Conceptual illustration of instrumented node

def process_with_telemetry(state: AgentState) -> dict[str, Any]:

start_time = time.time()

try:

result = process_logic(state)

metrics.increment("node.process.success")

metrics.timing("node.process.duration", time.time() - start_time)

return {"output": result, "status": "success"}

except ToolError as e:

metrics.increment("node.process.tool_failure")

logger.error(f"Tool failed: {e}", extra={"state_id": state["request_id"]})

return {"status": "retry", "error": str(e)}

Staff engineers respect systems where telemetry is baked into the architecture, not bolted on later.

Cycles, Feedback, and Convergence

Many AI tasks require iterative refinement:

- Validation

- Error correction

- Confidence based retries

LangGraph supports cycles while maintaining safety through explicit exit conditions.

Controlled Convergence

# Conceptual illustration

def should_continue(state: AgentState) -> str:

if state["iteration_count"] >= 3:

return "terminate" # Prevent infinite loops

if state["confidence"] > 0.9:

return "finalize"

return "refine"

graph.add_conditional_edges(

"validation_node",

should_continue,

{

"refine": "improvement_node",

"finalize": "output_node",

"terminate": END

}

)

This introduces the concept of controlled convergence: the system is allowed to revisit states, but only under predefined rules. This ensures progress without drift and improvement without runaway behavior.

Building a LangGraph Application: Conceptual Example

Here’s a simplified healthcare assistant that demonstrates key principles:

# Conceptual pseudocode for illustration

from typing import TypedDict, Any

class HealthcareAgentState(TypedDict):

query: str

sanitized_query: str

phi_detected: bool

retrieved_docs: list[dict[str, Any]]

response: str

confidence: float

iteration_count: int

audit_log: list[dict[str, Any]]

status: str

def sanitize_input(state: HealthcareAgentState) -> dict[str, Any]:

"""Remove PHI before processing"""

sanitized, phi_found = remove_phi(state["query"])

audit_entry = {

"step": "sanitization",

"phi_detected": phi_found,

"timestamp": now()

}

return {

"sanitized_query": sanitized,

"phi_detected": phi_found,

"audit_log": state["audit_log"] + [audit_entry]

}

def retrieve_knowledge(state: HealthcareAgentState) -> dict[str, Any]:

"""Fetch relevant medical guidelines"""

docs = search_medical_db(state["sanitized_query"])

return {

"retrieved_docs": docs,

"status": "retrieved" if docs else "no_results"

}

def generate_response(state: HealthcareAgentState) -> dict[str, Any]:

"""Generate response with confidence scoring"""

response, confidence = llm_generate(

query=state["sanitized_query"],

context=state["retrieved_docs"]

)

return {

"response": response,

"confidence": confidence,

"iteration_count": state["iteration_count"] + 1

}

def should_refine(state: HealthcareAgentState) -> str:

"""Routing logic based on state"""

if state["iteration_count"] >= 3:

return "escalate"

if state["confidence"] < 0.7:

return "refine"

if state["phi_detected"]:

return "escalate" # Human review required

return "finalize"

# Build the graph

from langgraph.graph import StateGraph, END

workflow = StateGraph(HealthcareAgentState)

workflow.add_node("sanitize", sanitize_input)

workflow.add_node("retrieve", retrieve_knowledge)

workflow.add_node("generate", generate_response)

workflow.add_node("human_review", escalate_to_human)

workflow.set_entry_point("sanitize")

workflow.add_edge("sanitize", "retrieve")

workflow.add_edge("retrieve", "generate")

workflow.add_conditional_edges(

"generate",

should_refine,

{

"refine": "generate", # Loop back for improvement

"escalate": "human_review",

"finalize": END

}

)

workflow.add_edge("human_review", END)

app = workflow.compile()

Key architectural decisions:

- PHI sanitization happens first (compliance by design)

- Confidence thresholds trigger refinement (quality control)

- Iteration budgets prevent runaway loops (termination guarantee)

- Low confidence or PHI detection escalates to humans (safety net)

- Every decision is logged (audit trail for regulators)

When LangGraph Becomes Necessary

LangGraph is not required for:

- Simple text generation

- Single step inference

- Casual experimentation

- Prototypes with no production intent

It becomes necessary when:

- AI workflows exceed trivial complexity (>3 steps)

- State must persist across steps

- Execution paths must be auditable

- Systems must fail safely and predictably

- Regulatory compliance requires traceability

- Multiple models or tools must be orchestrated

- Human-in-the-loop intervention is needed

In other words: when AI stops being a demo and starts being infrastructure.

Determinism as a Design Philosophy

LangGraph embodies a philosophical stance that is often uncomfortable in AI discourse: creativity must be subordinated to control in production systems.

While LLMs thrive in open ended environments, real systems require guarantees. LangGraph accepts the stochastic nature of models but isolates it within deterministic boundaries. The result is not less intelligence, but disciplined intelligence.

This distinction is what allows AI to move from experimentation to infrastructure.

LangGraph in the Broader Evolution of Software Systems

Historically, every major computing paradigm has undergone similar evolution. Early systems prioritize flexibility and speed of development. Over time, structure, abstraction, and formalism become necessary for scale and reliability.

LangGraph represents this maturation phase for LLM systems. It borrows from decades of research in:

- Distributed systems

- Workflow orchestration

- State machines

- Formal verification

…and applies those lessons to modern AI workloads.

This progression is not accidental, it reflects the natural lifecycle of production systems.

A Critical Perspective

LangGraph is not a silver bullet.

It introduces:

- Architectural overhead (you must design graphs)

- Cognitive complexity (teams must think in state machines)

- Design responsibility (decisions about control flow are explicit)

However, these costs mirror the evolution of every serious computing paradigm. Early simplicity gives way to structured discipline as systems mature. The alternative unstructured agents with implicit state does not scale to production requirements.

In regulated industries especially, the cost of informality far exceeds the cost of explicit design.

Getting Started with LangGraph

Installation

pip install langgraph

Core Concepts to Master

- State definition (TypedDict with explicit fields)

- Node functions (state → partial state updates)

- Graph construction (add_node, add_edge, add_conditional_edges)

- Conditional routing (explicit transition logic)

- Checkpointing (state persistence for long-running workflows)

Best Practices

- Design state schema first (before writing any nodes)

- Keep nodes focused (single responsibility)

- Make transitions explicit (no hidden control flow)

- Add telemetry early (instrument state transitions)

- Test failure paths (not just happy paths)

- Document routing logic (make decisions traceable)

Conclusion: Engineering Over Improvisation

LangGraph signals a transition away from improvisational AI development toward engineered systems that can be reasoned about formally.

It does not attempt to solve intelligence itself. Instead, it solves the problem of containing intelligence within reliable structures. In doing so, it enables LLMs to participate in serious software systems without undermining their integrity.

The future of AI in production will not belong to the most creative prompts, but to the most rigorously designed architectures. LangGraph provides the foundation for that future not by making models smarter, but by making systems more disciplined.

For staff and principal engineers tasked with making LLMs production-ready, LangGraph offers something rare: a path from research enthusiasm to operational reliability.